Integrating AI models with external data sources has historically required fragmented connectors, custom APIs, and repeated engineering effort. This approach does not scale well as systems grow in complexity.

I’m writing this for AI engineers, product architects, and technical leaders who are evaluating how to standardize context flow between models and external systems without reinventing integration layers repeatedly.

To address these structural inefficiencies, Anthropic introduced the Model Context Protocol (MCP), an open-source standard designed to formalize how AI systems connect to tools, data sources, and runtime environments.

What is MCP (Model Context Protocol)?

MCP functions as a standardized interface that lets AI tools interact consistently with content repositories, business platforms, and development environments. This shared framework makes AI applications more relevant and context-aware. MCP is an integration protocol, not a model, so it should not be confused with artificial general intelligence (AGI), which targets broad human-level capability.

The protocol reduces the need for dataset-specific connectors by introducing a consistent integration layer across AI systems and external data sources.

General Architecture of MCP

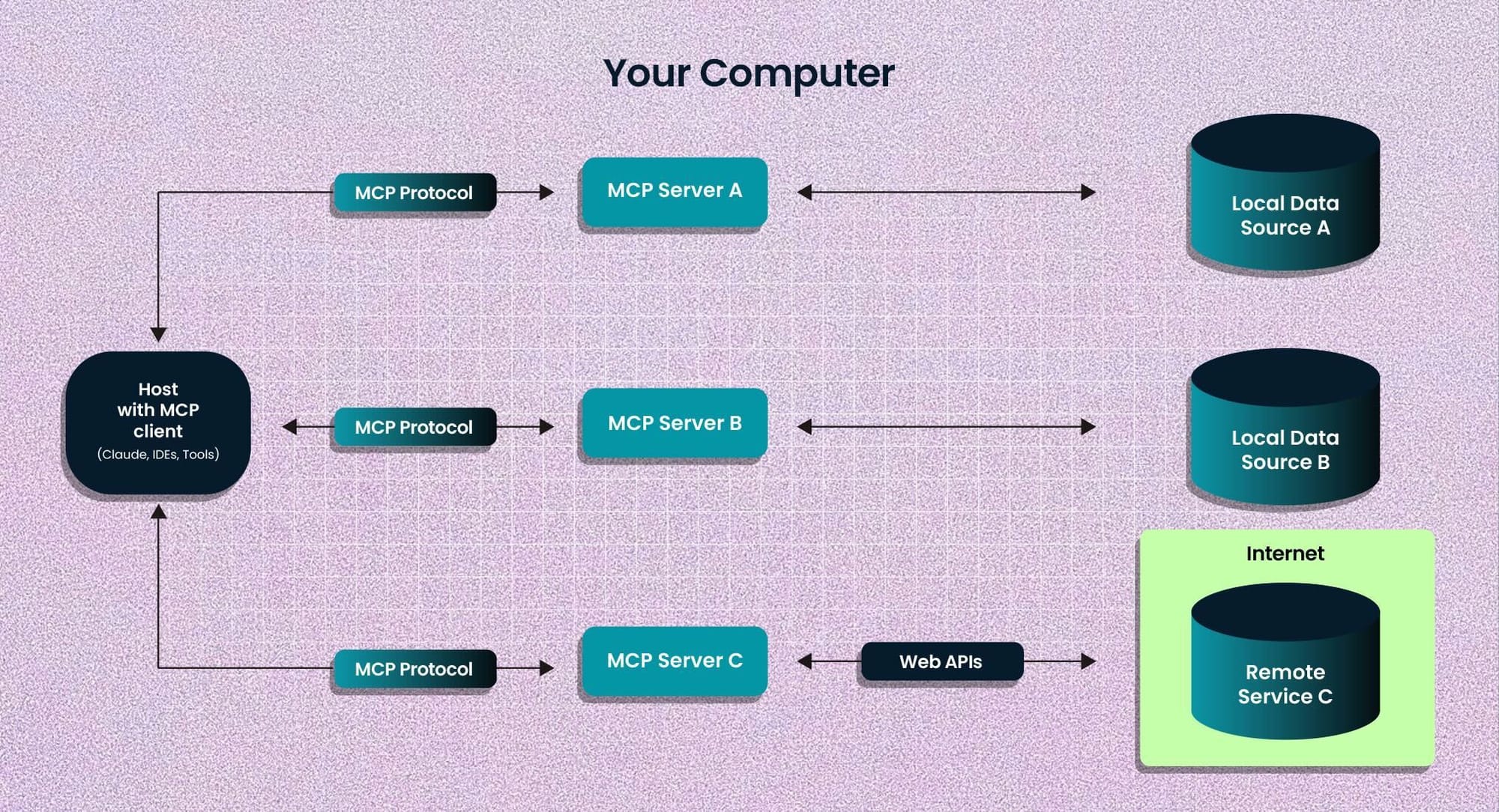

Architecturally, MCP follows a client-server model where a host application orchestrates connections to multiple context servers:

- Hosts: These are LLM applications (like Claude Desktop or Integrated Development Environments) that initiate connections. The host process acts as the container and coordinator, managing multiple client instances, controlling client connection permissions and lifecycle, enforcing security policies, handling user authorization decisions, coordinating AI/LLM integration and sampling, and managing context aggregation across clients.

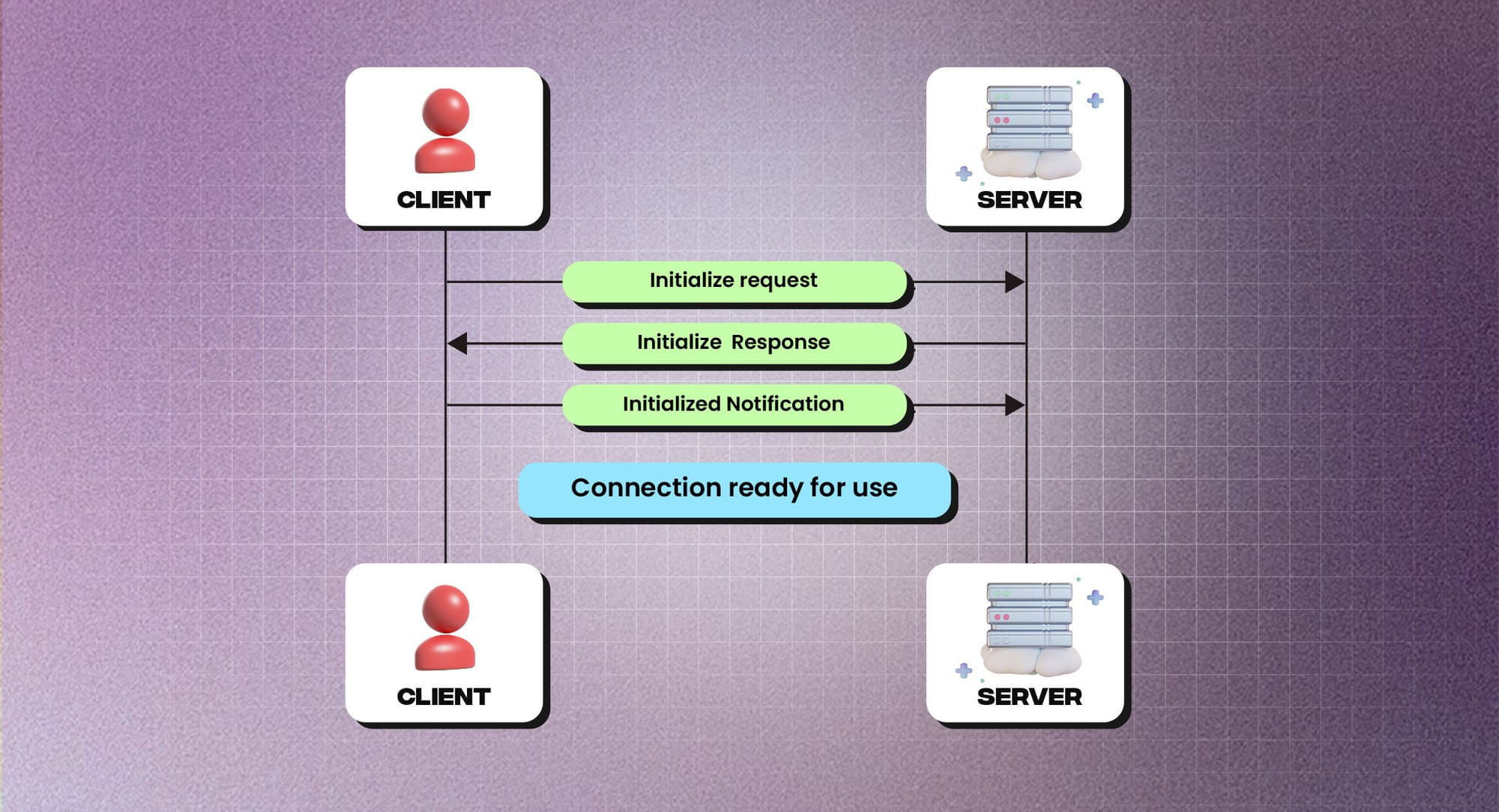

- Clients: Each client is created by the host and maintains an isolated server connection. Clients establish one stateful session per server, handle protocol negotiation and capability exchange, route protocol messages bidirectionally, manage subscriptions and notifications, and maintain security boundaries between servers.

- Servers: Servers provide specialized context and capabilities. They expose resources, tools, and prompts via MCP primitives, operate independently with focused responsibilities, and request sampling through client interfaces.

- Local Data Sources: Files, databases, and services on your computer that MCP servers can securely access.

- Remote Services: External systems available over the internet (e.g., through APIs) that MCP servers can connect to.
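The host, client, and server roles described above can be sketched in a few lines of Python. This is a minimal illustration of the relationships only, not the MCP SDK: the class names `Host` and `ClientSession`, the `allowed_servers` policy, and the server names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ClientSession:
    """One client: a single stateful session bound to exactly one server."""
    server_name: str
    capabilities: set[str] = field(default_factory=set)  # learned during negotiation


@dataclass
class Host:
    """The host: creates clients and decides which servers may be connected."""
    allowed_servers: set[str]  # hypothetical stand-in for a security policy
    clients: dict[str, ClientSession] = field(default_factory=dict)

    def connect(self, server_name: str, server_capabilities: set[str]) -> ClientSession:
        # Enforce the host's policy before any session is created.
        if server_name not in self.allowed_servers:
            raise PermissionError(f"server {server_name!r} is not permitted")
        # One isolated client per server; reuse the session if it already exists.
        if server_name not in self.clients:
            self.clients[server_name] = ClientSession(server_name, set(server_capabilities))
        return self.clients[server_name]

    def servers_with(self, capability: str) -> list[str]:
        # Aggregate across clients: which servers offer a given primitive?
        return sorted(
            name for name, client in self.clients.items()
            if capability in client.capabilities
        )


host = Host(allowed_servers={"files", "database"})
host.connect("files", {"resources"})
host.connect("database", {"resources", "tools"})
print(host.servers_with("resources"))
```

Keeping one session object per server is what lets the host revoke or restart a single connection without touching the others.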

Core Components of MCP (Model Context Protocol)

- Protocol Layer: The protocol layer handles message framing, request/response linking, and high-level communication patterns. Key classes include:
  - Protocol
  - Client
  - Server

- Transport Layer: The transport layer handles the actual communication between clients and servers. All transports use JSON-RPC 2.0 to exchange messages. MCP supports multiple transport mechanisms:
  - Stdio transport
  - HTTP with SSE transport
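To make the transport layer more concrete, here is a rough sketch of how JSON-RPC 2.0 messages could be framed over a stdio-style stream. It assumes newline-delimited JSON (one message per line), and the helper names `write_message` and `read_messages` are invented for this example. An in-memory `StringIO` buffer stands in for the real stdin/stdout pipes.

```python
import io
import json


def write_message(stream, message: dict) -> None:
    # Serialize onto a single line; json.dumps escapes any newlines inside strings,
    # so the trailing "\n" is the only line break and acts as the frame delimiter.
    stream.write(json.dumps(message, separators=(",", ":")) + "\n")


def read_messages(stream):
    # Yield one decoded message per non-empty line.
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


buffer = io.StringIO()  # stand-in for a pipe between client and server
write_message(buffer, {"jsonrpc": "2.0", "id": 1, "method": "ping"})
write_message(buffer, {"jsonrpc": "2.0", "id": 1, "result": {}})

buffer.seek(0)
received = list(read_messages(buffer))
for message in received:
    print(message)
```

The same message dictionaries could be carried over an HTTP-based transport; only the framing changes, not the JSON-RPC payload.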

Message Types of MCP

MCP defines the following core message types:

- Requests expect a response from the other side

- Results are successful responses to requests

- Errors indicate that a request failed

- Notifications are one-way messages that don’t expect a response
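Because MCP messages are JSON-RPC 2.0 objects, the four types above can be told apart by which fields are present. The following sketch shows one way to do that; the `classify` function is a hypothetical helper, and the sample payloads are illustrative rather than taken from a real session.

```python
def classify(message: dict) -> str:
    """Return which of the four message types a JSON-RPC 2.0 object is."""
    if message.get("jsonrpc") != "2.0":
        raise ValueError("not a JSON-RPC 2.0 message")
    has_id = "id" in message
    if "method" in message:
        # A method with an id expects a reply; without an id it is one-way.
        return "request" if has_id else "notification"
    if has_id and "error" in message:
        return "error"
    if has_id and "result" in message:
        return "result"
    raise ValueError("unrecognized message shape")


samples = [
    {"jsonrpc": "2.0", "id": 7, "method": "resources/list"},
    {"jsonrpc": "2.0", "id": 7, "result": {"resources": []}},
    {"jsonrpc": "2.0", "id": 8, "error": {"code": -32601, "message": "Method not found"}},
    {"jsonrpc": "2.0", "method": "notifications/initialized"},
]

kinds = [classify(sample) for sample in samples]
print(kinds)
```

The shared `id` is what links a result or error back to the request that caused it, which is the "request/response linking" the protocol layer is responsible for.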

Resources in Model Context Protocol

Resources are a foundational primitive in MCP. Servers use them to expose data and content that clients can read and supply as context for LLM interactions. Examples include:

- File contents

- Database records

- API responses

- Live system data

- Screenshots and images

- Log files

- And more

Each resource is identified by a unique URI and can contain either text or binary data.
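As a sketch of what "a URI plus text or binary data" might look like in code, the example below models a resource's contents as a small dataclass. The field names loosely mirror the `uri`, `mimeType`, `text`, and `blob` fields as I understand them from the MCP specification, with binary data carried as base64. Treat that mapping, the `ResourceContents` class, and the sample URIs as assumptions for illustration.

```python
import base64
from dataclasses import dataclass
from typing import Optional


@dataclass
class ResourceContents:
    uri: str                    # unique identifier for the resource
    mime_type: str
    text: Optional[str] = None  # set for text resources
    blob: Optional[str] = None  # base64-encoded payload for binary resources

    def __post_init__(self) -> None:
        # A resource carries text or binary data, never both and never neither.
        if (self.text is None) == (self.blob is None):
            raise ValueError("provide exactly one of text or blob")

    def data(self) -> bytes:
        # Return the raw bytes regardless of how the contents were carried.
        if self.text is not None:
            return self.text.encode("utf-8")
        return base64.b64decode(self.blob)


def from_bytes(uri: str, mime_type: str, payload: bytes) -> ResourceContents:
    # Wrap raw bytes as a binary resource by base64-encoding them.
    return ResourceContents(uri, mime_type, blob=base64.b64encode(payload).decode("ascii"))


log = ResourceContents("file:///logs/app.log", "text/plain", text="started\n")
image = from_bytes("file:///shots/home.png", "image/png", b"\x89PNG")
print(log.data(), image.data())
```

Encoding binary payloads as base64 keeps every resource representable inside a JSON message, at the cost of some size overhead.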

Benefits of Using the Model Context Protocol (MCP)

Adopting MCP brings structural advantages that streamline development, improve system composability, and strengthen security.

1. Simplified Integration Processes

- Standardized Connectivity: MCP introduces a uniform protocol layer for AI-to-data interaction, reducing integration variability and eliminating the need for custom integrations for each dataset. This standardization reduces development time and complexity.

- Unified Development Approach: Developers can implement MCP once and seamlessly connect to multiple data sources, streamlining the integration process and reducing redundancy.

2. Enhanced Composability and Modularity

- Component-Based Architecture: MCP's design promotes a modular approach, allowing developers to build applications with interchangeable components. This enhances flexibility and scalability in system design.

- Interoperability: By adhering to open standards, MCP ensures that various AI applications and tools can work together seamlessly, fostering a cohesive ecosystem.

3. Improved Security and Data Isolation

- Granular Access Controls: MCP incorporates detailed access control mechanisms, allowing for precise management of permissions and enhancing data security.

- Data Segmentation: The protocol's architecture supports data isolation, ensuring that sensitive information is compartmentalized and protected from unauthorized access.

4. Accelerated Development and Maintenance

- Reduced Redundancy: With MCP's standardized approach, developers no longer need to create custom connectors for each data source, significantly reducing repetitive coding tasks.

- Easier Maintenance: A unified protocol simplifies the maintenance process, as updates or changes can be applied universally rather than individually to each integration.

5. Future-Proofing and Scalability

- Adaptability: MCP's flexible framework allows for easy adaptation to emerging technologies and data sources, ensuring long-term viability.

- Scalable Integrations: The protocol supports scalable architectures, enabling systems to grow and integrate additional functionalities without significant overhauls.

6. Enhanced Performance and Efficiency

- Direct Data Access: Structured client-server communication avoids the latency added by redundant middleware layers, which improves response times. The stdio transport further supports streamlined communication between clients and servers.

- Optimized Resource Utilization: Standardized integrations allow for better resource management, optimizing system performance and reducing overhead.

Real-World Applications and Adoption of MCP

MCP has gained rapid adoption across industries, which its architectural clarity and interoperability model help explain. The sections below outline where it is used and how widely it has been adopted.

1. Industry Adoption

- Major Tech Companies: Several prominent technology firms have integrated MCP into their platforms, showcasing its practical benefits. This widespread adoption underscores MCP's reliability and effectiveness in real-world scenarios.

- Coding Platforms: Platforms such as Replit, Codeium, and Sourcegraph have adopted MCP to enhance their AI agents, enabling these tools to perform tasks on behalf of users with greater efficiency and accuracy.

- Enterprise Integration: Companies like Goldman Sachs and AT&T have utilized AI models compatible with protocols like MCP to streamline various business functions, including customer service and code generation.

2. Community Engagement

- Open-Source Contributions: The open-source nature of MCP has fostered a vibrant developer community, leading to continuous enhancements and a growing repository of tools and integrations. This collaborative environment accelerates innovation and broadens MCP's applicability.

- Educational Resources: The community has generated extensive documentation, tutorials, and best practices, facilitating easier adoption and implementation of MCP across various projects.

3. Diverse Applications

- AI Assistants: MCP enables AI assistants to access and interact with external data sources, improving their ability to provide accurate and contextually relevant responses. In some scenarios, developers compare these setups against small language models when they want a lightweight, faster-to-deploy alternative that still delivers strong contextual performance.

- Development Tools: Integrated Development Environments (IDEs) and other development tools leverage MCP to offer AI-driven code suggestions, debugging assistance, and project management features, enhancing developer productivity.

Frequently Asked Questions

1. What problem does Model Context Protocol (MCP) solve?

MCP standardizes how AI models connect to external tools and data sources, eliminating fragmented custom integrations.

2. How does MCP differ from traditional API integrations?

Unlike isolated APIs, MCP introduces a unified protocol layer that manages structured context exchange across systems.

3. Is MCP only for large language models?

MCP is primarily used with LLM-based systems but can support any AI workflow requiring structured context integration.

4. What architecture does MCP use?

MCP uses a client-server architecture with host applications, clients, and specialized context servers.

5. How does MCP improve AI security?

MCP enforces isolated sessions, granular permissions, and controlled resource exposure through structured communication.

6. Why is MCP important in 2026?

As AI systems increasingly rely on tool integration and external context, standardized protocols like MCP enable scalable and maintainable architectures.

Conclusion

The Model Context Protocol (MCP) represents a structural advancement in how AI systems interface with external tools and data sources.

By formalizing context exchange through a standardized protocol, MCP reduces integration fragmentation and supports scalable AI architectures.

As AI systems evolve toward tool-augmented workflows, protocols like MCP are becoming foundational to secure, modular, and future-ready AI infrastructure. Adopting MCP gives AI applications consistent access to the data they need, which improves the relevance and accuracy of their output across domains.

Looking ahead, MCP's evolution is set to drive significant transformations in AI development:

- Sustainable AI Architectures: As MCP matures, it will enable AI systems to maintain context across diverse tools and datasets, fostering more sustainable and scalable AI architectures.

- Widespread Adoption: The open-source nature of MCP encourages widespread adoption, leading to a unified approach in AI integrations and reducing redundancy in development efforts.

- Continuous Innovation: With a growing community contributing to its development, MCP is poised to incorporate cutting-edge features that address emerging challenges in AI, such as real-time decision-making and ethical considerations.

In essence, MCP not only simplifies the integration process but also paves the way for more intelligent, secure, and ethical AI applications. Its role in shaping the future of AI underscores its significance as a foundational protocol in the ongoing evolution of artificial intelligence.
