The rapid evolution of AI development frameworks has changed how developers build applications powered by large language models (LLMs). For LLM-based systems, the choice between LangChain and LlamaIndex is often an important architectural decision.

While working with LLM-powered systems, I noticed that many developers struggle to decide which framework better fits their project requirements, especially when building applications using ecosystems like Transformers, vLLM, or SGLang.

Although both tools operate in the same AI development landscape, their strengths lie in different areas. This guide explains the core capabilities, use cases, and architectural differences between LangChain and LlamaIndex so developers can choose the framework that aligns best with their AI application goals.

What is LangChain?

LangChain is a framework designed for building applications powered by large language models (LLMs). It focuses on enabling developers to construct complex AI workflows using modular components and integrations.

The framework is particularly well suited for tasks involving generative AI pipelines, retrieval-augmented generation (RAG), and multi-step reasoning workflows.

LangChain supports the entire lifecycle of LLM applications by providing tools that simplify development, monitoring, and deployment. This modular architecture allows developers to design sophisticated AI systems while maintaining flexibility in model selection and integrations.
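
To make the idea of modular, composable steps concrete, here is a minimal, framework-free sketch in plain Python. It does not use LangChain's actual API: `make_chain`, `build_prompt`, `fake_model`, and `parse_output` are hypothetical names invented for illustration, and `fake_model` simply echoes its input in place of a real LLM call.

```python
from typing import Callable, List

# A step is any function that takes a string and returns a string.
Step = Callable[[str], str]


def make_chain(steps: List[Step]) -> Step:
    """Compose steps left to right into a single callable."""
    def run(value: str) -> str:
        for step in steps:
            value = step(value)
        return value
    return run


def build_prompt(topic: str) -> str:
    # Fill a fixed prompt template with the user's topic.
    return f"Summarize the following topic in one sentence: {topic}"


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for an LLM call; it only echoes the prompt,
    # padded with whitespace so the parser has something to clean up.
    return f"  [model output for: {prompt}]  "


def parse_output(text: str) -> str:
    # Post-process the raw model text.
    return text.strip()


# Prompt -> model -> parser, assembled from interchangeable parts.
summarize = make_chain([build_prompt, fake_model, parse_output])
result = summarize("vector databases")
print(result)
```

Because every step shares the same shape, swapping `fake_model` for a call to a real model provider would not require changes to the other steps. That is the kind of flexibility a modular design is meant to give.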

LangChain's tooling covers three stages of the LLM application lifecycle:

Development:

Developers can build applications using LangChain’s open-source components, integrations, and workflow tools. LangGraph allows the creation of stateful AI agents with streaming and human-in-the-loop capabilities.

Productionization:

LangSmith provides visibility into AI chains by enabling inspection, monitoring, and evaluation of workflows. This helps teams optimize system performance and reliability before large-scale deployment.

Deployment:

LangGraph Cloud enables developers to convert LangGraph workflows into production-ready APIs and AI assistants, making deployment and scaling significantly easier.

What is LlamaIndex?

LlamaIndex is a framework designed to build context-augmented AI applications powered by large language models.

The framework focuses on data indexing, retrieval, and efficient interaction with structured and unstructured datasets. This makes it particularly useful when AI applications must query or analyze large volumes of information.

LlamaIndex simplifies the process of connecting LLMs with multiple data sources, enabling applications to retrieve relevant context efficiently before generating responses.

Because of this design, it is widely used for knowledge retrieval systems, document intelligence platforms, and data-driven AI assistants.
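
The index-then-retrieve pattern described above can be sketched in a few lines of plain Python. This is an illustration under simplifying assumptions, not LlamaIndex's API: `ToyIndex`, `tokenize`, and `cosine` are hypothetical names, the two documents are made up, and word counts stand in for the learned embeddings a real system would use.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def tokenize(text: str) -> List[str]:
    # Lowercase the text and split it into alphanumeric words.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two word-count vectors.
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class ToyIndex:
    """Stores documents as word-count vectors and ranks them per query."""

    def __init__(self) -> None:
        self.docs: Dict[str, str] = {}
        self.vectors: Dict[str, Counter] = {}

    def add(self, doc_id: str, text: str) -> None:
        # Indexing step: convert the text to a vector once, up front.
        self.docs[doc_id] = text
        self.vectors[doc_id] = Counter(tokenize(text))

    def retrieve(self, query: str, top_k: int = 1) -> List[Tuple[str, float]]:
        # Retrieval step: score every document and keep the best matches.
        q = Counter(tokenize(query))
        scored = [(doc_id, cosine(q, vec)) for doc_id, vec in self.vectors.items()]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:top_k]


index = ToyIndex()
index.add("billing", "Invoices are issued on the first day of each month.")
index.add("support", "Support tickets are answered within two business days.")

hits = index.retrieve("When are invoices issued?")
print(hits[0][0], index.docs[hits[0][0]])
```

The retrieved text would then be passed to the model as context before it generates a response.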

Advanced Use Cases and Strengths

When evaluating LangChain vs. LlamaIndex, understanding their advanced capabilities is important because each framework addresses different layers of the AI development stack.

LangChain primarily focuses on workflow orchestration, agent design, and generative AI pipelines, while LlamaIndex focuses on data retrieval and knowledge indexing.

Understanding these strengths helps developers choose the right framework depending on whether their application requires complex AI workflows or efficient data retrieval systems.

Use Cases and Strengths of LangChain

- Multi-Model Integration

LangChain supports multiple model providers such as OpenAI, Hugging Face, and other APIs. This flexibility allows developers to build AI systems that combine different model capabilities.

- Chaining Workflows

LangChain enables sequential and parallel workflows with memory augmentation. This makes it well suited for conversational agents, automation pipelines, and multi-step reasoning systems.

- Generative Tasks

The framework excels at generative AI use cases including text generation, summarization, translation, and code generation.

- Observability

LangSmith enables monitoring, debugging, and evaluation of AI workflows, providing deeper visibility into how LLM chains perform in production environments.
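
As a rough illustration of what "memory augmentation" means for a conversational workflow, the sketch below keeps a rolling buffer of past turns and prepends it to each new prompt. It is plain Python rather than LangChain code: `BufferMemory`, `chat`, and `fake_model` are hypothetical names, and `fake_model` only reports how many user messages it can see instead of calling a real LLM.

```python
from typing import Callable, List, Tuple


class BufferMemory:
    """Keeps the most recent `max_turns` (user, assistant) exchanges."""

    def __init__(self, max_turns: int = 2) -> None:
        self.max_turns = max_turns
        self.turns: List[Tuple[str, str]] = []

    def add(self, user: str, assistant: str) -> None:
        # Append the new exchange and drop anything older than the window.
        self.turns.append((user, assistant))
        self.turns = self.turns[-self.max_turns:]

    def render(self) -> str:
        # Serialize the stored turns into prompt text.
        return "\n".join(f"User: {u}\nAssistant: {a}" for u, a in self.turns)


def chat(memory: BufferMemory, model: Callable[[str], str], message: str) -> str:
    # Build the prompt from the remembered history plus the new message.
    history = memory.render()
    new_turn = f"User: {message}\nAssistant:"
    prompt = f"{history}\n{new_turn}" if history else new_turn
    reply = model(prompt)
    memory.add(message, reply)
    return reply


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for an LLM: counts the user turns in the prompt.
    return f"I can see {prompt.count('User:')} user message(s)."


memory = BufferMemory(max_turns=2)
first = chat(memory, fake_model, "Hello")
second = chat(memory, fake_model, "What did I just say?")
print(first)
print(second)
```

The fixed window is one simple design choice. It keeps prompts bounded in size, at the cost of forgetting older turns.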

Use Cases and Strengths of LlamaIndex

- Indexing and Search

LlamaIndex specializes in organizing and retrieving large datasets. It supports domain-specific embeddings to improve the accuracy of AI-driven retrieval.

- Structured Queries

Tools such as RetrieverQueryEngine and SimpleDirectoryReader allow developers to load and query structured and unstructured data efficiently.

- Interactive Engines

Components like ContextChatEngine enable interactive querying of stored knowledge, making it suitable for Q&A systems and knowledge assistants.

- Vector Store Integration

LlamaIndex integrates with vector databases such as Pinecone and Milvus, enabling scalable semantic search and retrieval.

Decision Factors To Consider

- Workflow Complexity

Applications that involve multi-step logic, agent workflows, and memory management benefit from LangChain’s orchestration capabilities.

- Search and Retrieval Systems

Applications focused on document indexing, semantic search, and knowledge retrieval typically benefit from LlamaIndex.

- Budget and Cost Considerations

LangChain may be more cost-efficient when embedding large datasets, while LlamaIndex is optimized for systems handling frequent retrieval queries.

- Lifecycle Management

LangChain provides stronger control over lifecycle management tasks such as debugging, monitoring, and evaluating AI workflows.

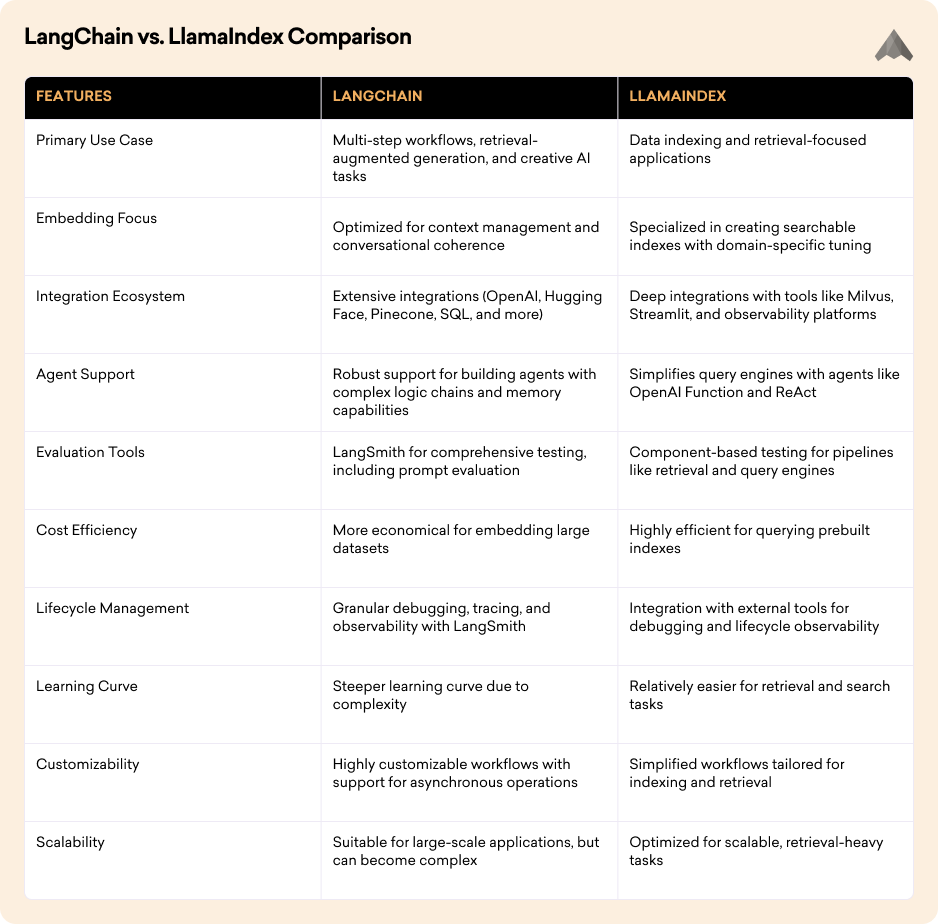

LangChain vs. LlamaIndex Comparison

To make an informed choice in the LangChain vs. LlamaIndex debate, let's examine their key features side by side:

Can LlamaIndex and LangChain Work Together?

Yes. Many AI systems combine LangChain and LlamaIndex to leverage the strengths of both frameworks.

In this architecture, LlamaIndex typically manages data indexing and retrieval, while LangChain handles agent workflows, reasoning pipelines, and generative tasks.

This hybrid approach enables developers to build AI applications that combine efficient knowledge retrieval with advanced LLM orchestration.
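
The division of labor in such a hybrid setup can be sketched without either library. In the plain-Python example below, a retrieval layer picks the most relevant document and an orchestration layer builds the prompt and calls the model. All names (`make_retriever`, `answer`, `fake_model`) and the sample documents are hypothetical; word overlap stands in for real semantic search, and `fake_model` just returns the context it was given.

```python
import re
from typing import Callable, Dict, Set


def tokenize(text: str) -> Set[str]:
    # Lowercase the text and collect its distinct alphanumeric words.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def make_retriever(docs: Dict[str, str]) -> Callable[[str], str]:
    """Retrieval layer: return the document sharing the most words with the query."""
    token_sets = {doc_id: tokenize(text) for doc_id, text in docs.items()}

    def retrieve(query: str) -> str:
        q = tokenize(query)
        best_id = max(token_sets, key=lambda doc_id: len(q & token_sets[doc_id]))
        return docs[best_id]

    return retrieve


def answer(question: str, retrieve: Callable[[str], str],
           model: Callable[[str], str]) -> str:
    """Orchestration layer: fetch context, build the prompt, call the model."""
    context = retrieve(question)
    prompt = f"Context: {context}\nQuestion: {question}\nAnswer:"
    return model(prompt)


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for an LLM: returns the context line verbatim.
    context_line = prompt.splitlines()[0]
    return context_line[len("Context: "):]


docs = {
    "refunds": "Refunds are processed within five days.",
    "shipping": "Orders ship from the Berlin warehouse.",
}
retrieve = make_retriever(docs)
reply = answer("How long do refunds take?", retrieve, fake_model)
print(reply)
```

Keeping the retriever behind a plain callable means the retrieval side and the orchestration side can be developed or replaced independently.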

FAQ

What is the difference between LangChain and LlamaIndex?

LangChain focuses on building LLM workflows, agents, and generative AI pipelines, while LlamaIndex specializes in data indexing, retrieval, and knowledge querying for LLM applications.

When should developers use LangChain?

LangChain is ideal for applications that require multi-step reasoning, AI agents, conversational workflows, and generative AI tasks.

When should developers use LlamaIndex?

LlamaIndex works best when applications require efficient document retrieval, knowledge indexing, and structured data interaction with LLMs.

Can LangChain and LlamaIndex be used together?

Yes. Many AI architectures use LlamaIndex for data retrieval and LangChain for workflow orchestration and generation tasks.

Which framework is better for RAG systems?

Both frameworks can support retrieval-augmented generation (RAG). LlamaIndex handles data retrieval efficiently, while LangChain manages generation pipelines and AI workflows.

Our Final Words

LangChain and LlamaIndex serve distinct but complementary roles within the AI development ecosystem.

LangChain excels in workflow orchestration and generative AI pipelines, while LlamaIndex focuses on data indexing and efficient knowledge retrieval.

Choosing the right framework depends on the architecture, scale, and data requirements of your AI application.

In many production scenarios, combining both frameworks can provide the most effective approach to building scalable, intelligent AI systems.
