The tech world is buzzing with terms like AI, ML, and Deep Learning, and I wrote this guide because these concepts are often used interchangeably, even though they solve very different problems.

In this article, I break down the practical difference between AI, ML, and Deep Learning, focusing on what each is best suited for, where they overlap, and why the distinction matters when making real-world technology decisions.

If you’re trying to understand AI, ML, and Deep Learning and how these technologies actually fit together, this guide will help you see the full picture clearly.

What is Artificial Intelligence (AI)?

Definition: Artificial Intelligence (AI) is the broadest layer in this stack. It refers to systems designed to perform tasks that typically require human intelligence, such as reasoning, decision-making, and language understanding.

AI acts as the umbrella under which techniques like Machine Learning and Deep Learning operate, making it a strategic concept rather than a single technology. Its scope covers reasoning, learning, problem-solving, perception, and language understanding.

Example: Think of AI as a smart assistant that can play chess, recommend movies, or even drive a car. It encompasses various technologies and approaches.
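AI does not have to involve learning at all. As a minimal sketch, assume a toy game (much simpler than chess) where two players take turns removing 1 to 3 stones and whoever takes the last stone wins. The program below plays it well using nothing but search. The game rules and the names `MAX_TAKE`, `can_win`, and `best_move` are illustrative assumptions, not part of any library.

```python
from functools import lru_cache

MAX_TAKE = 3  # assumed rule: a player may take 1, 2, or 3 stones per turn


@lru_cache(maxsize=None)
def can_win(stones: int) -> bool:
    """Return True if the player to move can force a win."""
    # With no stones left, the previous player took the last one and won.
    if stones == 0:
        return False
    # A position is winning if some move leaves the opponent in a losing one.
    return any(
        not can_win(stones - take)
        for take in range(1, min(MAX_TAKE, stones) + 1)
    )


def best_move(stones: int) -> int:
    """Pick a move that leaves the opponent losing, else take one stone."""
    for take in range(1, min(MAX_TAKE, stones) + 1):
        if not can_win(stones - take):
            return take
    return 1  # every move loses against perfect play, so take the minimum


print(can_win(10), best_move(10))  # True 2
print(can_win(8))                  # False
```

Nothing here is learned from data: the behaviour comes entirely from hand-written rules plus exhaustive search, which is why it counts as AI but not as Machine Learning.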

Advantages

- Versatility: AI frameworks adapt across industries and problem types.

- Efficiency: AI systems accelerate large-scale decision-making.

Disadvantages

- Complexity: Designing intelligent systems requires significant expertise.

- Ethical Concerns: AI adoption raises governance and accountability challenges.

Next Up: Now that we understand AI, let’s dive into Machine Learning, a subset of AI.

What is Machine Learning (ML)?

Definition: Machine Learning (ML) is a subset of AI focused on systems that learn patterns from data instead of relying on explicitly programmed rules.

ML is most effective when decisions need to improve continuously based on historical or real-time data, making it ideal for prediction, classification, and recommendation systems. Instead of being explicitly programmed for every task, ML systems learn from experience.

Example: Imagine a spam filter in your email. It learns from the emails you mark as spam and gradually improves its ability to filter out unwanted messages.
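The spam filter idea can be sketched in a few lines. The example below assumes a toy naive Bayes classifier written from scratch; real mail providers use far more sophisticated models, and the class name `TinySpamFilter`, its methods, and the sample messages are all hypothetical. The point is only that the filter's behaviour changes as it sees labeled examples.

```python
import math
from collections import Counter


class TinySpamFilter:
    """Toy naive Bayes text classifier; a teaching sketch, not production code."""

    def __init__(self):
        self.word_counts = {"spam": Counter(), "ham": Counter()}
        self.message_counts = Counter()

    def mark(self, message: str, label: str) -> None:
        """Learn from one message the user labeled 'spam' or 'ham'."""
        self.word_counts[label].update(message.lower().split())
        self.message_counts[label] += 1

    def _log_score(self, words, label):
        counts = self.word_counts[label]
        vocab = set(self.word_counts["spam"]) | set(self.word_counts["ham"])
        total = sum(counts.values())
        # Log prior: how often this label has been seen so far (add-one smoothed).
        score = math.log(
            (self.message_counts[label] + 1)
            / (sum(self.message_counts.values()) + 2)
        )
        for word in words:
            # Add-one (Laplace) smoothing avoids log(0) for unseen words.
            score += math.log((counts[word] + 1) / (total + len(vocab) + 1))
        return score

    def is_spam(self, message: str) -> bool:
        words = message.lower().split()
        return self._log_score(words, "spam") > self._log_score(words, "ham")


spam_filter = TinySpamFilter()
print(spam_filter.is_spam("claim your free money"))  # False: nothing learned yet

spam_filter.mark("win free money now", "spam")
spam_filter.mark("free prize claim now", "spam")
spam_filter.mark("lunch meeting at noon", "ham")
spam_filter.mark("project meeting notes attached", "ham")
print(spam_filter.is_spam("claim your free money"))  # True: learned from examples
```

No rule about the words "free" or "money" was ever written by hand. The filter inferred their significance from the messages marked as spam, which is the defining trait of Machine Learning.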

Advantages

- Adaptability: Models improve as new data becomes available.

- Automation: ML reduces manual decision logic.

Disadvantages

- Data Dependency: Model quality depends heavily on data quality.

- Limited Explainability: Some models lack transparent reasoning.

Next Up: With ML in mind, let’s explore Deep Learning, which is a more advanced form of ML.

What is Deep Learning (DL)?

Definition: Deep Learning (DL) is a specialized form of Machine Learning that uses multi-layered neural networks to model complex patterns in large datasets.

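DL excels when raw, unstructured data such as images, audio, or text must be interpreted without manual feature engineering. The "deep" in the name refers to the many layers a network stacks, with each layer building more abstract features from the output of the one before. The design is loosely inspired by how neurons in the brain connect, which allows for more complex data processing.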

Example: Think of facial recognition technology. DL algorithms can identify and verify faces in photos by analyzing patterns in pixel data. Beyond images, deep learning also powers voice-driven apps when paired with modern text-to-speech (TTS) solutions, making natural human-computer interaction possible.
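To make "multi-layered" concrete, here is a minimal sketch of a forward pass through a network with one hidden layer, in plain Python. The weights are chosen by hand purely for illustration (a real network would learn them during training and would usually have many more layers), and the names `relu`, `dense`, and `forward` are assumptions for this example. The network computes XOR, a pattern that a single weighted sum of the inputs cannot represent.

```python
def relu(values):
    """Rectified linear unit applied element-wise."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One fully connected layer: each output is a weighted sum plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


# Illustrative weights set by hand; training would normally find these.
HIDDEN_W = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_B = [0.0, -1.0]
OUTPUT_W = [[1.0, -2.0]]
OUTPUT_B = [0.0]


def forward(x1, x2):
    """Pass two inputs through a hidden layer, then an output layer."""
    hidden = relu(dense([x1, x2], HIDDEN_W, HIDDEN_B))
    return dense(hidden, OUTPUT_W, OUTPUT_B)[0]


for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, forward(a, b))  # 0.0, 1.0, 1.0, 0.0
```

The hidden layer turns the raw inputs into intermediate features, and the output layer combines those features. Deep networks repeat this step many times, which is how they handle pixels, audio samples, or text without hand-built features.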

Advantages

- High Accuracy: Excels in vision, speech, and language tasks.

- Automatic Feature Learning: Reduces manual data preparation.

Disadvantages

- Resource Intensive: Requires powerful compute and large datasets.

- Lower Interpretability: Decision logic is harder to explain.

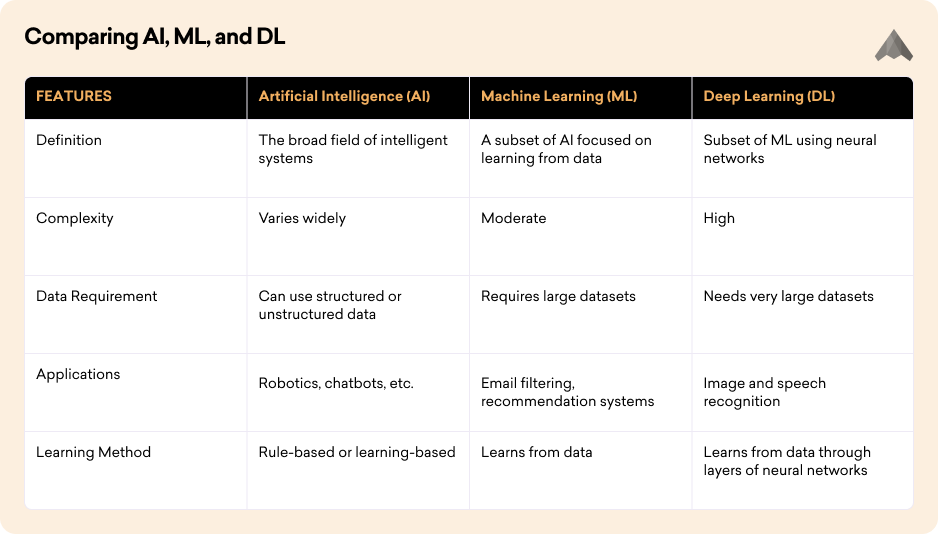

Now that we’ve covered the basics of AI, ML, and Deep Learning, let’s compare them directly.

Comparing AI, ML, and DL

When comparing these technologies, it’s also worth noting how advances in deep learning have led to breakthroughs like large language models (LLMs), which use massive neural networks trained on vast datasets to understand and generate human-like text.

Our Final Words

AI represents the goal of intelligent behaviour, ML defines how systems learn from data, and DL specifies how complex learning happens at scale.

Understanding this hierarchy helps teams choose the right level of sophistication without overengineering solutions. AI, ML, and Deep Learning are not competing technologies; they are layers of capability. ML is a method within AI that allows systems to learn from data, while DL is a more specialized method within ML that uses multi-layered neural networks to learn from large amounts of data. Choosing the right approach depends on the problem, data availability, and performance requirements.

Clarity on the difference between AI, ML, and Deep Learning enables smarter adoption decisions and more sustainable AI systems.

As we move forward, the integration of AI, ML, and DL will continue to shape the future of technology, offering exciting possibilities and challenges. For developers building in this space, modern AI code editors can further accelerate productivity, simplify workflows, and make experimenting with these technologies more efficient.

Frequently Asked Questions

1. What is the difference between AI, ML, and Deep Learning?

AI is the broad concept of machines performing intelligent tasks. Machine Learning is a subset of AI that learns from data, while Deep Learning is a subset of ML that uses neural networks to solve complex problems.

2. Is Machine Learning the same as Artificial Intelligence?

No. Machine Learning is one method used within AI. AI includes many approaches beyond ML, such as rule-based systems, reasoning engines, and automation.

3. Why is Deep Learning considered advanced?

Deep Learning uses multi-layered neural networks that can automatically learn patterns from large amounts of data, making it powerful for images, speech, and natural language tasks.

4. Where is AI used in real life?

AI is used in virtual assistants, recommendation engines, fraud detection, healthcare diagnostics, autonomous vehicles, and customer support systems.

5. When should I use Machine Learning instead of Deep Learning?

Machine Learning is often better when datasets are smaller, interpretability matters, or computing resources are limited.

6. Does Deep Learning always perform better than Machine Learning?

Not always. Deep Learning can outperform traditional ML on large unstructured datasets, but simpler ML models may work better for smaller or structured datasets.

7. Can AI work without Machine Learning?

Yes. Many AI systems use rules, logic, search algorithms, or automation without relying on Machine Learning.

8. Why is it important to understand AI, ML, and Deep Learning separately?

Understanding the difference helps businesses and developers choose the right technology, avoid overengineering, and make smarter implementation decisions.
