Redirection Loops: A Beginner’s Guide

Redirect loops are one of the most common yet overlooked technical SEO issues that silently damage crawlability, performance, and user experience.

I’m writing this guide for developers, SEO specialists, and website owners who want clarity on how redirect loops occur and how to prevent them systematically.

In this guide, you’ll understand what redirects are, how redirect loops are created, how they affect SEO, and the practical steps required to diagnose and fix them effectively.

Redirections 101!

What are Redirections?

Redirections are HTTP-based forwarding mechanisms that instruct browsers and search engines to request a different URL than originally entered. Properly implemented redirects preserve link equity, prevent duplicate content, and maintain search engine index accuracy.

When implemented, they guide visitors to the right content even if they use an outdated URL. For search engines, effective redirects ensure the proper pages get indexed, safeguarding the site's rankings and avoiding problems like duplicate content.

Types of Redirections

HTTP defines several redirect status codes, but the ones you'll run into most often in production environments are 301 (permanent) and 302 (temporary) redirects.

301 Redirect: This signals to search engines and web browsers that the page has moved to a new URL for good. It's the go-to method for redirecting because it passes on the original page's SEO ranking and link value to the new page.

302 Redirect: This shows that the move isn't forever, and the original URL might come back into play down the road. Search engines don't transfer SEO value in this case, seeing the redirect as a short-term detour.

Basic Redirection Status Codes

301 - Permanently Moved

Purpose: This tells us the resource you want has a new permanent address at a different URL.

Effect: It sends both people and search engines to the new URL. Search engines update their records so the new URL shows up in future searches, and the SEO value carries over from the old URL to the new one.

302 - Found (Temporarily Moved)

Purpose: To send the request to a different URL for a short time.

Effect: People will go to the new URL, but search engines will keep the original one in their records. Link value won't pass to the temporary URL.

Let’s Make Your Website Faster and Error-Free

F22 Labs ensures your website runs smoothly by detecting and repairing redirection issues before they affect users.

307 - Temporary Redirect

Purpose: Works like 302, but it tells the client to repeat the request at the new URL using the original method (e.g., POST stays POST).

Effect: Search engines maintain the initial URL in their database, and no link value shifts to the new URL.

308 - Permanent Redirect

Purpose: Works like 301: the resource has relocated permanently, but the request method should stay the same.

Effect: It signals search engines to revise their database and transfer link value to the new URL.
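The four codes above differ along two axes: whether the move is permanent, and whether the client must keep the original request method. As a rough illustration, here is a small Python sketch that summarizes a status code along those axes. The function name `describe_redirect` and the `REDIRECT_TRAITS` table are made up for this example; only `http.HTTPStatus` comes from the standard library.

```python
from http import HTTPStatus

# Redirect codes covered in this guide, mapped to (is_permanent, preserves_method).
REDIRECT_TRAITS = {
    HTTPStatus.MOVED_PERMANENTLY: (True, False),   # 301
    HTTPStatus.FOUND: (False, False),              # 302
    HTTPStatus.TEMPORARY_REDIRECT: (False, True),  # 307
    HTTPStatus.PERMANENT_REDIRECT: (True, True),   # 308
}


def describe_redirect(status_code: int) -> str:
    """Return a one-line summary of a redirect status code (illustrative helper)."""
    try:
        status = HTTPStatus(status_code)
    except ValueError:
        return f"{status_code}: not a known HTTP status"

    traits = REDIRECT_TRAITS.get(status)
    if traits is None:
        return f"{status_code} {status.phrase}: not one of the redirects covered here"

    permanent, keeps_method = traits
    duration = "permanent" if permanent else "temporary"
    method = "method preserved" if keeps_method else "method may change to GET"
    return f"{status_code} {status.phrase}: {duration}, {method}"


if __name__ == "__main__":
    for code in (301, 302, 307, 308):
        print(describe_redirect(code))
```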

How Do Redirects Affect SEO?

Redirect configuration directly influences crawl efficiency, link equity transfer, and index consolidation. Well-configured redirects keep link value, make sure search engines index the right URLs, and stop duplicate content problems. When you set up 301 redirects the right way, they move most of the old page's ranking power to the new URL. This helps keep search engine rankings steady. They also make things better for users by taking them to the content they want without errors.

But if you don't handle redirects well, for example by allowing long chains or loops, it can weaken link value, mess with how well search engines crawl your site, and slow down page loading. A good redirect plan makes sure both people and search engines end up with the right content, which helps your overall SEO.

Loops ➰

How are loops created?

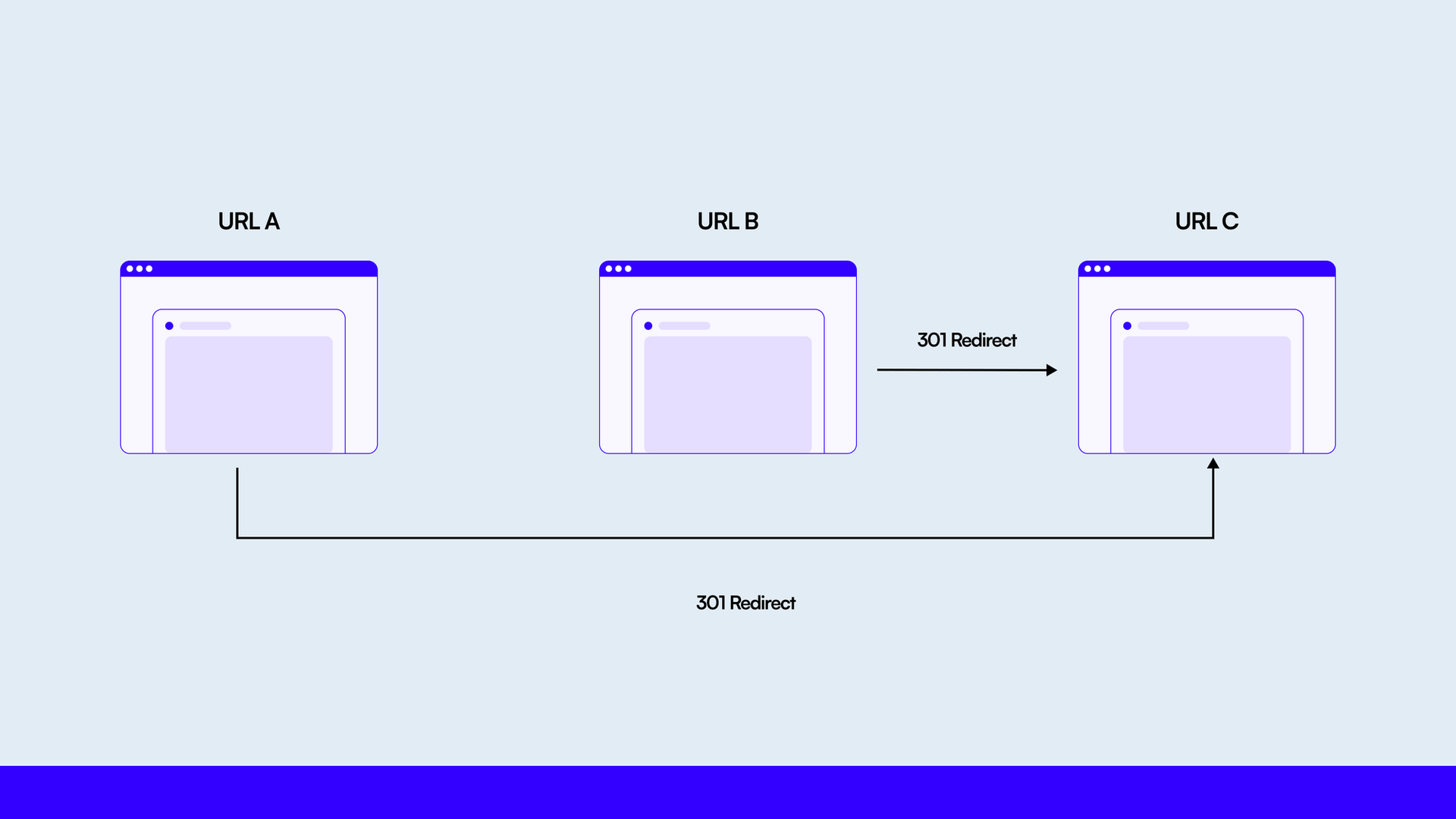

A redirect loop occurs when multiple redirect rules create a circular reference, causing a URL to send visitors back to itself or to another URL that leads back to the original one. The request never resolves to a final destination, and this endless cycle keeps the page from loading or being indexed.

The most common reasons for redirect loops are conflicting redirect rules that create circular paths, and long chains of redirects that end up where they started. Changing redirect paths later without reviewing the existing rules can also cause these loops.
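To make the circular path concrete, here is a minimal Python sketch that follows redirects through an in-memory rule table and reports the cycle if one exists. The `find_loop` helper and the sample paths are hypothetical; real rules would come from your server or CMS configuration.

```python
from typing import Dict, List, Optional


def find_loop(redirects: Dict[str, str], start: str) -> Optional[List[str]]:
    """Follow redirects from `start`; return the cycle as a list of URLs, or None."""
    path: List[str] = []
    position: Dict[str, int] = {}  # URL -> index in `path` where it was first seen
    current = start

    while current in redirects:
        if current in position:
            # We came back to a URL already visited: that is the loop.
            return path[position[current]:] + [current]
        position[current] = len(path)
        path.append(current)
        current = redirects[current]

    return None  # reached a URL with no further redirect


if __name__ == "__main__":
    rules = {"/a": "/b", "/b": "/c", "/c": "/a", "/old": "/new"}
    print(find_loop(rules, "/a"))    # ['/a', '/b', '/c', '/a']
    print(find_loop(rules, "/old"))  # None
```

Here `/a` goes to `/b`, `/b` to `/c`, and `/c` back to `/a`, so no request starting at any of them ever reaches a final page.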

Solutions to loops?

Fixing redirect loops requires a structured audit of all redirect rules to find and correct circular paths, redundant chains, and conflicting server-level configurations. Keep your redirect configuration simple by consolidating rules and minimizing the number of redirects in place.

The goal is a straight line from each old web address to its new one, ideally in a single hop. Be sure that each redirect rule is correct and will not create rogue loops. Redirect checker tools make the paths easier to see and help you manage your redirects.

Setting a redirect limit on your server helps contain loops by stopping redirects after a set number of steps. Also watch for conflicts between your redirect rules to avoid such cycles. Monitor and test your redirects to catch problems before they reach end users.
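The idea of a redirect limit can be sketched in a few lines of Python. This is not server configuration; it only shows the logic an audit script or crawler might use, with a made-up `resolve` function, a hypothetical `TooManyRedirects` exception, and an arbitrary default of five hops.

```python
from typing import Dict


class TooManyRedirects(Exception):
    """Raised when a URL does not resolve within the allowed number of hops."""


def resolve(redirects: Dict[str, str], url: str, max_hops: int = 5) -> str:
    """Follow redirects from `url` and return the final URL, giving up after `max_hops`."""
    hops = 0
    while url in redirects:
        if hops >= max_hops:
            raise TooManyRedirects(f"gave up after {max_hops} hops at {url}")
        url = redirects[url]
        hops += 1
    return url


if __name__ == "__main__":
    print(resolve({"/a": "/b", "/b": "/c"}, "/a"))  # /c
    try:
        resolve({"/a": "/b", "/b": "/a"}, "/a")
    except TooManyRedirects as err:
        print("loop or long chain:", err)
```

A hop limit does not tell a loop apart from a very long chain, but both are worth fixing, so a single cutoff is usually enough for monitoring.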

Our Journey

Problems we faced?

In high-volume content environments where multiple product URLs that drive significant traffic are embedded across blogs, redirect management becomes increasingly complex. When these product URLs change, we currently add redirects to the existing links so that traffic remains uninterrupted and the blogs don’t need to be updated manually.

With around 500 blogs, each featuring approximately 10 products, we manage roughly 5,000 URLs. Previously, redirects were added incrementally without a centralized tracking structure, which left us with a large number of redirects, redundant chains, and even unintended redirect loops. To address this, we needed a more stable solution than simply adding more redirects.

How Did We Fix It?

This outline provides a broad overview of how we resolved the issue. In our case, the number of links was overwhelming, with some products having more than seven redirects tracked in a spreadsheet. Due to name changes suggested by the SEO team and modifications made by various people, redirect loops started to occur. To resolve this systematically, we wrote a script that:

Extracted all the data from the spreadsheet, converted the CSV format to JSON, and transformed it into the required format.

Followed the format mentioned above, counted the redirects for each row, and looped over them to ensure all redirects pointed to the same destination URL.

Merged the old and new JSON files, removing any duplicate entries from the old redirects based on the initial redirects.

Generated the final JSON and updated the entire redirects list.

Redirect loops are not just technical annoyances; they directly impact SEO performance, crawl budget allocation, and user trust.

A structured redirect strategy, centralized tracking, rule consolidation, and periodic auditing prevent performance degradation and ranking instability.

When redirect logic is managed systematically rather than reactively, websites maintain clean architecture and stronger search visibility.
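The script steps listed earlier in this section can be sketched roughly as follows. This is not our production script: the CSV column names `source` and `destination`, the function names, and the rule that new entries win over old ones with the same source are all assumptions made for the example.

```python
import csv
import io
import json
from typing import Dict


def load_rows(csv_text: str) -> Dict[str, str]:
    """Parse CSV text with assumed `source` and `destination` columns into a dict."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row["source"]: row["destination"] for row in reader}


def flatten(redirects: Dict[str, str]) -> Dict[str, str]:
    """Point every source directly at the final destination of its chain.

    Sources that lead into a loop have no final destination and are dropped.
    """
    flat: Dict[str, str] = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        looped = False
        while target in redirects:
            if target in seen:
                looped = True
                break
            seen.add(target)
            target = redirects[target]
        if not looped:
            flat[source] = target
    return flat


def merge(old: Dict[str, str], new: Dict[str, str]) -> Dict[str, str]:
    """Combine old and new rules (new wins on duplicate sources), then flatten."""
    return flatten({**old, **new})


if __name__ == "__main__":
    sheet = "source,destination\n/p1,/p2\n/p2,/p3\n"
    old_rules = {"/p0": "/p1", "/p3-old": "/p3"}
    final = merge(old_rules, load_rows(sheet))
    print(json.dumps(final, indent=2, sort_keys=True))
```

After the merge, every source in the sample data points straight at `/p3`, so no request needs more than one hop.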

Issues faced?

The primary challenge was processing high-volume redirect data efficiently while avoiding memory overload and JSON structure inconsistencies. The process often consumed a significant amount of RAM and generated a large number of objects. Comparing JSON data from old and new redirects was also problematic; occasionally, comparisons failed because of small discrepancies such as leading slashes.
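One way to avoid the leading-slash mismatches is to normalize every path before comparing old and new entries. The sketch below is an illustration rather than the code we ran; `normalize_path` and `normalize_redirects` are hypothetical helpers, and the sketch assumes that site-relative paths should start with exactly one slash while absolute URLs are left untouched.

```python
from typing import Dict


def normalize_path(path: str) -> str:
    """Trim whitespace and give site-relative paths exactly one leading slash."""
    cleaned = path.strip()
    if "://" in cleaned:
        # Absolute URL (assumed to contain a scheme): leave it as it is.
        return cleaned
    return "/" + cleaned.lstrip("/")


def normalize_redirects(redirects: Dict[str, str]) -> Dict[str, str]:
    """Normalize both sides of every rule so old and new data compare equal."""
    return {normalize_path(src): normalize_path(dst) for src, dst in redirects.items()}


if __name__ == "__main__":
    old = {"products/shoes": "/products/sneakers"}
    new = {"/products/shoes": "products/sneakers"}
    print(normalize_redirects(old) == normalize_redirects(new))  # True
```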

How Did We Test It?

Validation involved manually sampling URLs and verifying redirect paths using https://www.redirect-checker.org/ to confirm resolution and loop elimination.
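Manual sampling can be complemented by a quick offline check on the final redirect list itself. The following Python sketch, with a made-up `find_unresolved` helper, flags any rule whose destination is itself redirected, which means a chain or loop is still present. It only inspects the rule table and does not replace checking live URLs.

```python
from typing import Dict, List


def find_unresolved(redirects: Dict[str, str]) -> List[str]:
    """Return sources whose destination is itself a redirect source (chain or loop)."""
    return sorted(src for src, dst in redirects.items() if dst in redirects)


if __name__ == "__main__":
    print(find_unresolved({"/a": "/c", "/b": "/c"}))  # []
    print(find_unresolved({"/a": "/b", "/b": "/c"}))  # ['/a']
```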

Frequently Asked Questions

What causes redirect loops?

Redirect loops occur when conflicting redirect rules create circular paths, or when long chains of redirects end up where they started.

How do redirects affect SEO?

Proper redirects maintain link value, ensure correct indexing, and prevent duplicate content issues. They help preserve search rankings when implemented correctly.

How can we fix redirect loops?

Audit your redirects, simplify configurations, ensure each rule is correct, use redirect checker tools, and set server-side redirect limits to prevent infinite loops.